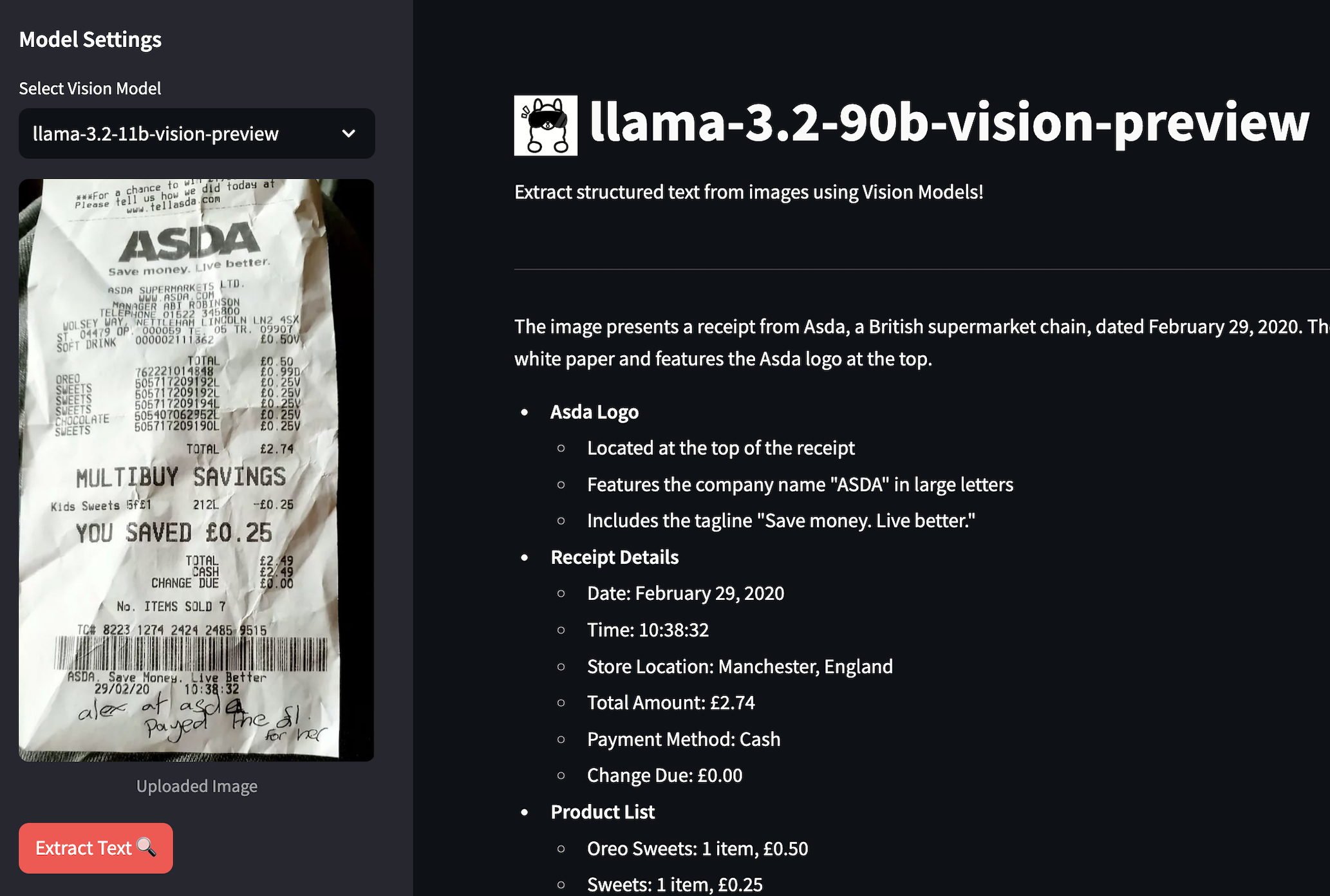

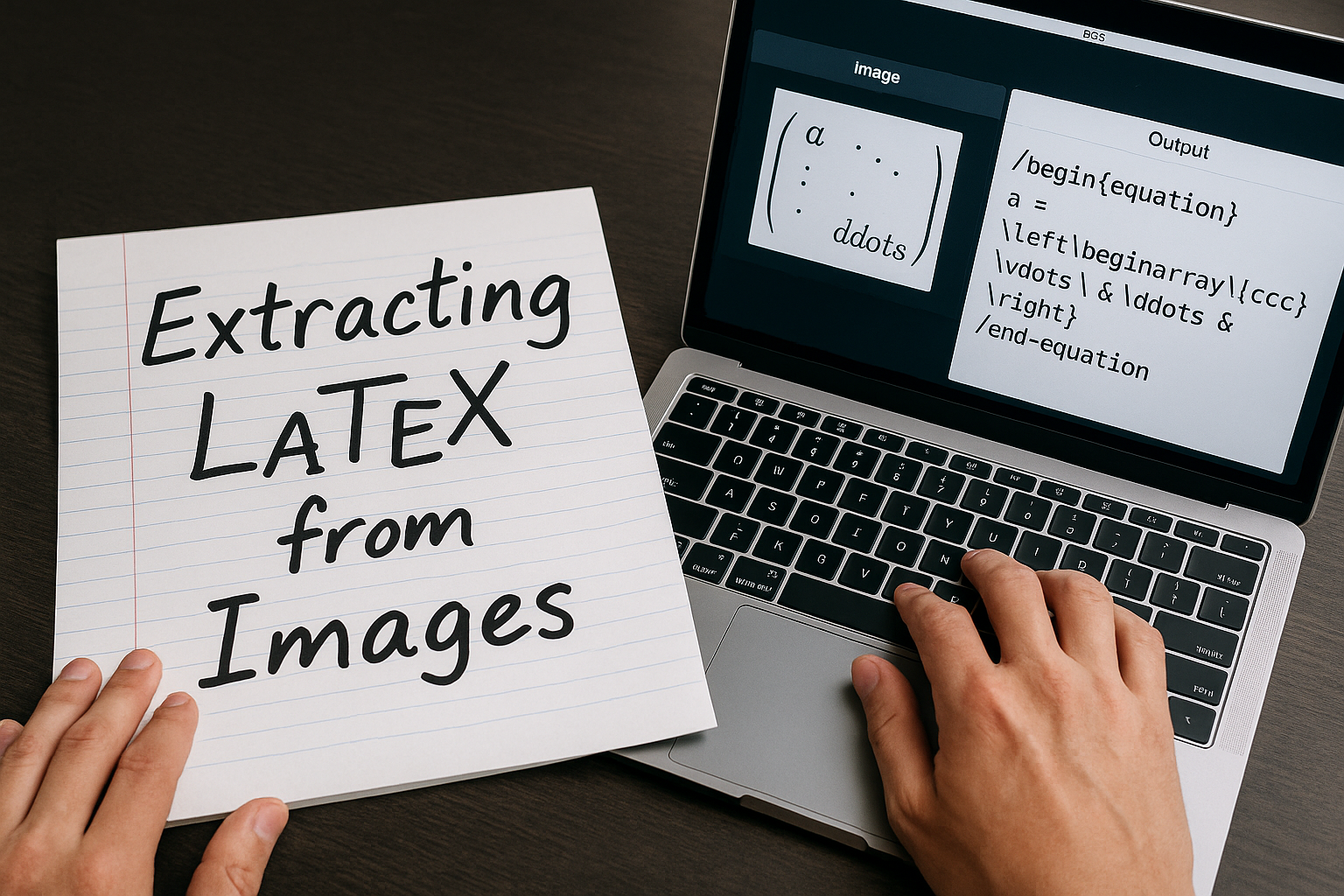

Ever tried to copy text from a handwritten note or a screenshot? Yeah, it’s a pain. That’s why I decided to build something cool using Meta’s new Llama 3.2 vision models. This little app can extract text from images, and yes, that includes your messy handwriting! Let me walk you through how I put this together and why it’s actually pretty awesome.

Why Llama 3.2 is a game-changer for OCR

Traditional OCR tools are… well, let’s just say they struggle with anything beyond clean printed text. Llama 3.2 is different: it’s a vision-language model, so it reads the image in context and can make sense of handwriting, odd layouts, and noisy screenshots.

I’ve been playing with both the 90B and 11B parameter versions. The 90B is obviously beefier and handles trickier stuff, but honestly, the 11B is pretty solid for most things too.

The stack

- Brain power: Llama 3.2 vision models
- Speed boost: Groq API (seriously fast inference)
- Pretty face: Streamlit (because who wants to spend weeks on UI?)
- Image handling: Good ol’ Pillow
- Glue: Python & some base64 magic

The Code

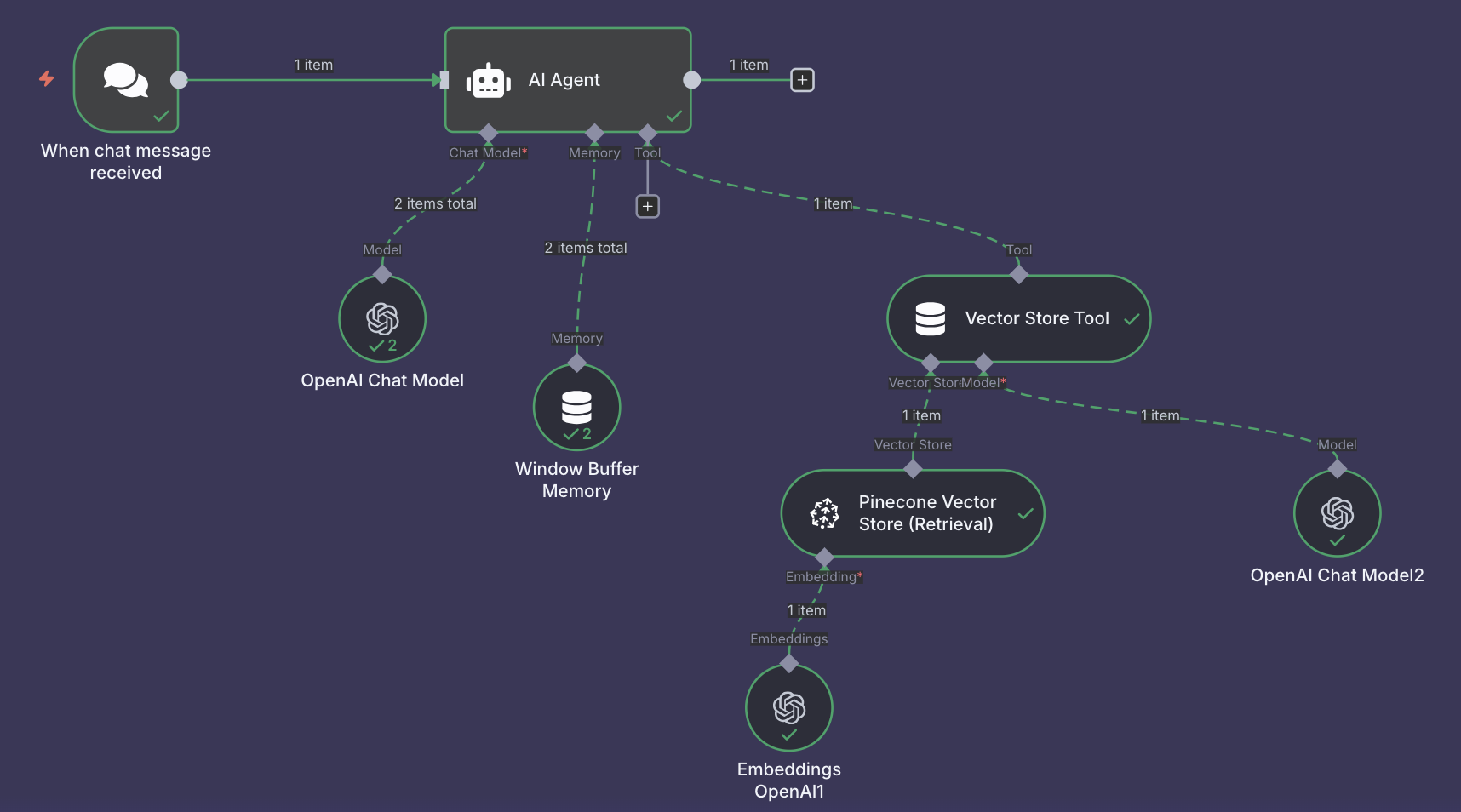
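If you can't copy from the screenshot, here's a stripped-down sketch of how the pieces in the stack can fit together: Pillow shrinks the image, base64 turns it into a data URL, and Groq's chat completions endpoint gets it alongside a text prompt. Treat it as an illustration, not the exact app code. The model ID, the prompt, the 1024-pixel size cap, and all the function names are assumptions, and Groq's model names change over time, so check their current model list before running it.

```python
import base64
import io
import os

# Assumed Groq model ID for the 11B vision model; verify against Groq's model list.
DEFAULT_MODEL = "llama-3.2-11b-vision-preview"
# Hypothetical prompt; tune it for your own images.
DEFAULT_PROMPT = "Extract all text from this image. Return only the text."


def prepare_image(raw_bytes: bytes, max_side: int = 1024) -> bytes:
    """Downscale large images with Pillow and re-encode them as JPEG.

    The 1024-pixel cap is an arbitrary choice to keep the payload small.
    """
    from PIL import Image  # imported lazily so the other helpers work without Pillow

    image = Image.open(io.BytesIO(raw_bytes)).convert("RGB")
    image.thumbnail((max_side, max_side))  # in place, keeps aspect ratio, never upscales
    buffer = io.BytesIO()
    image.save(buffer, format="JPEG")
    return buffer.getvalue()


def to_data_url(image_bytes: bytes, mime_type: str = "image/jpeg") -> str:
    """The "base64 magic": wrap raw image bytes in a data URL."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


def build_messages(data_url: str, prompt: str = DEFAULT_PROMPT) -> list:
    """Build a single user message holding the prompt and the image."""
    return [
        {
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                {"type": "image_url", "image_url": {"url": data_url}},
            ],
        }
    ]


def extract_text(raw_bytes: bytes, model: str = DEFAULT_MODEL) -> str:
    """Send the image to Groq and return the model's reply as plain text."""
    from groq import Groq  # pip install groq

    client = Groq(api_key=os.environ["GROQ_API_KEY"])
    data_url = to_data_url(prepare_image(raw_bytes))
    response = client.chat.completions.create(
        model=model,
        messages=build_messages(data_url),
    )
    return response.choices[0].message.content
```

On the Streamlit side, the bytes from `st.file_uploader(...)` (via `.getvalue()`) can go straight into `extract_text`, and the result can be shown with `st.write`. Keeping the payload builder separate from the API call also makes it easy to check the request shape without spending any tokens.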
